In my last post I introduced construct validity. Here I will talk more about some specific aspects of construct validity.

I previously mentioned “unidimensionality,” and some readers may want to know what this term means. In the assessment realm, unidimensionality refers to the measurement of one psychological dimension/trait/construct/attribute/skill/ability/etc. For example, if we have an assessment that is designed to measure the construct “math ability,” we would want to ensure that all the questions in the assessment measure this construct and only this construct.

One can investigate the degree to which an assessment is unidimensional by using well-known statistical methods such as principal component analysis (PCA) or factor analysis (FA) to explore or confirm which dimensions each question in the assessment loads onto. I have done a few of these in my day using software like SPSS, and I can tell you from firsthand experience that they are a ton of fun.
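For readers without SPSS at hand, here is a minimal Python sketch of one common first check in a PCA: how much of the total variance the first principal component of the item correlation matrix accounts for. The item scores are made up for illustration, the function names are hypothetical, and power iteration stands in for the full eigendecomposition a statistics package would run.

```python
from math import sqrt
from statistics import mean, stdev


def correlation_matrix(scores):
    """Pearson correlations between items (rows = participants, columns = items)."""
    n = len(scores)
    z_columns = []
    for column in zip(*scores):
        m, s = mean(column), stdev(column)
        z_columns.append([(x - m) / s for x in column])
    return [
        [sum(a * b for a, b in zip(zi, zj)) / (n - 1) for zj in z_columns]
        for zi in z_columns
    ]


def first_eigenvalue(matrix, iterations=200):
    """Largest eigenvalue by power iteration.

    Assumes the items are mostly positively correlated, so that the
    all-ones starting vector is not orthogonal to the first component.
    """
    size = len(matrix)
    v = [1.0] * size
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(size)) for i in range(size)]
        norm = sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    mv = [sum(matrix[i][j] * v[j] for j in range(size)) for i in range(size)]
    return sum(a * b for a, b in zip(v, mv))  # Rayleigh quotient


# Hypothetical scores: five participants answering three math items.
item_scores = [
    [1, 1, 2],
    [2, 2, 2],
    [3, 3, 4],
    [4, 5, 4],
    [5, 5, 5],
]

r = correlation_matrix(item_scores)
# The eigenvalues of a correlation matrix sum to the number of items.
share = first_eigenvalue(r) / len(r)
print(f"Share of variance on the first component: {share:.2f}")
```

A large share on the first component is consistent with a single dominant dimension, but it is only a rough indicator. A real study would also look at the remaining eigenvalues and the item loadings, with far more than five participants.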

Unidimensionality is an important assumption in a number of areas of psychometrics. It has implications for statistics like internal consistency reliability (e.g., Cronbach’s alpha tends to be higher when all items measure the same construct) and for the interpretation of participant scores (e.g., if an assessment measures a random smattering of dimensions, what does a participant’s score really mean?).
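To make the internal consistency point concrete, here is a small Python sketch of the standard Cronbach’s alpha formula, alpha = k/(k-1) × (1 − sum of item variances / variance of total scores), where k is the number of items. Both data sets are invented for illustration and the function name is hypothetical.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha (rows = participants, columns = items)."""
    k = len(scores[0])
    item_variance_sum = sum(variance(column) for column in zip(*scores))
    total_variance = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical items that rise and fall together across participants.
consistent_items = [
    [1, 1, 2],
    [2, 2, 2],
    [3, 3, 4],
    [4, 5, 4],
    [5, 5, 5],
]

# Hypothetical items that do not move together at all.
mixed_items = [
    [1, 5, 3],
    [2, 1, 5],
    [3, 4, 1],
    [4, 2, 4],
    [5, 3, 2],
]

alpha_consistent = cronbach_alpha(consistent_items)
alpha_mixed = cronbach_alpha(mixed_items)
print(f"alpha, consistent items: {alpha_consistent:.2f}")
print(f"alpha, mixed items:      {alpha_mixed:.2f}")
```

The items that move together produce a much higher alpha than the items that do not. Keep in mind that a high alpha on its own does not prove unidimensionality, which is why the dimensionality checks above are still worth running.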

Here’s how this concept of unidimensionality fits in with the concept of construct validity: If an assessment is designed to measure only one dimension/construct, this should actually be the case, and this design assumption can be investigated/confirmed. If an assessment is composed of four topics, each of which is designed to measure only one dimension, then this can be investigated/confirmed. In other words, the dimensionality assumptions about the measurement of the construct(s) composing the assessment should be validated, for example using methods like PCA or FA.

Moving on to the details of convergent and discriminant validity, these two are in some ways flip sides of the same coin. With convergent validity, we are checking whether our math ability test correlates with other well-known math ability tests already in use. With discriminant validity, we are checking whether our math ability test does not correlate with well-known tests that measure something different from math ability, like verbal ability.

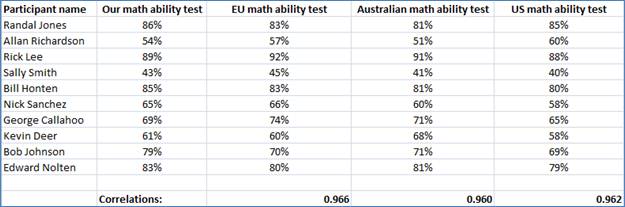

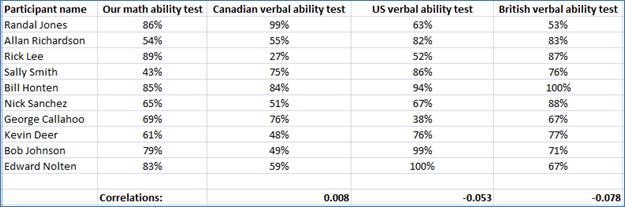

Using our trusty statistical analysis program, Excel, we can conduct a mini convergent and discriminant validity study. For the convergent validity piece, we had the same ten participants take four math ability tests: Our math ability test and three other well-known tests. For the discriminant validity piece, we also had the same ten participants take three well-known verbal ability tests.

To investigate convergent validity, we correlated the scores from Our math ability test with the scores from the three other math ability tests and found high correlations with each: 0.966 with the EU math ability test, 0.960 with the Australian math ability test, and 0.962 with the US math ability test.

To investigate discriminant validity, we correlated the scores from Our math ability test with the scores from the three verbal ability tests and found very low correlations with each: 0.008 with the Canadian verbal ability test, -0.053 with the US verbal ability test, and -0.078 with the British verbal ability test.
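If you would like to try the same kind of check outside of Excel, the Pearson correlation is easy to compute by hand. The sketch below uses invented scores for ten participants, not the data from the study above, and the variable and function names are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    dx = [a - mean_x for a in x]
    dy = [b - mean_y for b in y]
    covariance_sum = sum(a * b for a, b in zip(dx, dy))
    return covariance_sum / sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))


# Hypothetical scores for the same ten participants on three tests.
our_math = [55, 62, 70, 48, 81, 90, 66, 73, 59, 85]
other_math = [57, 60, 72, 50, 79, 92, 64, 75, 61, 83]
verbal = [70, 55, 81, 66, 62, 73, 48, 90, 85, 59]

convergent_r = pearson(our_math, other_math)
discriminant_r = pearson(our_math, verbal)
print(f"Our math vs. other math test: {convergent_r:.3f}")
print(f"Our math vs. verbal test:     {discriminant_r:.3f}")
```

A correlation near 1 with the other math test supports convergent validity, and a correlation near 0 with the verbal test supports discriminant validity. With only ten participants, either estimate is quite noisy, so treat a mini study like this as a demonstration and not as evidence.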

Nomological validity needs to be addressed whenever new research is conducted into an existing construct or a new one. We would want to ensure that the current research is supported by previous research. If a completely new construct is being proposed, we would need to justify how it is similar to, and different from, constructs covered by earlier research.

In my next post I will discuss modern perspectives on validity.