Discover how our AI Scoring tool works to save SME time and deliver faster learner feedback with automated and rubric-aligned scoring.

Blog

From assessment best-practice to the power of certifications, our blog has a range of topics built with you in mind

Explore everything

Why human oversight in AI-based assessments matters for bias, trust, and accuracy

AI-powered assessment is useful but must have human oversight so stakeholders can trust test scores for essential people decisions.

Why human oversight in AI-based assessments matters for bias, trust, and accuracy

Building the next generation of IT certifications: A CPO’s perspective

Discover insights on how to build a successful IT certification program in a world of skill gaps, AI, & challenges with scaling practical tests.

Building the next generation of IT certifications: A CPO’s perspective

L&D in 2026: from traditional to transformative

The world of Learning and Development (L&D) is built on change, so how do you stay ahead in 2026?

L&D in 2026: from traditional to transformative

The anatomy of a successful certification program in an AI world

Join Questionmark’s Chief Product Officer, Neil McGough, as he discusses the challenges in creating a successful certification program in an AI world…

The anatomy of a successful certification program in an AI world

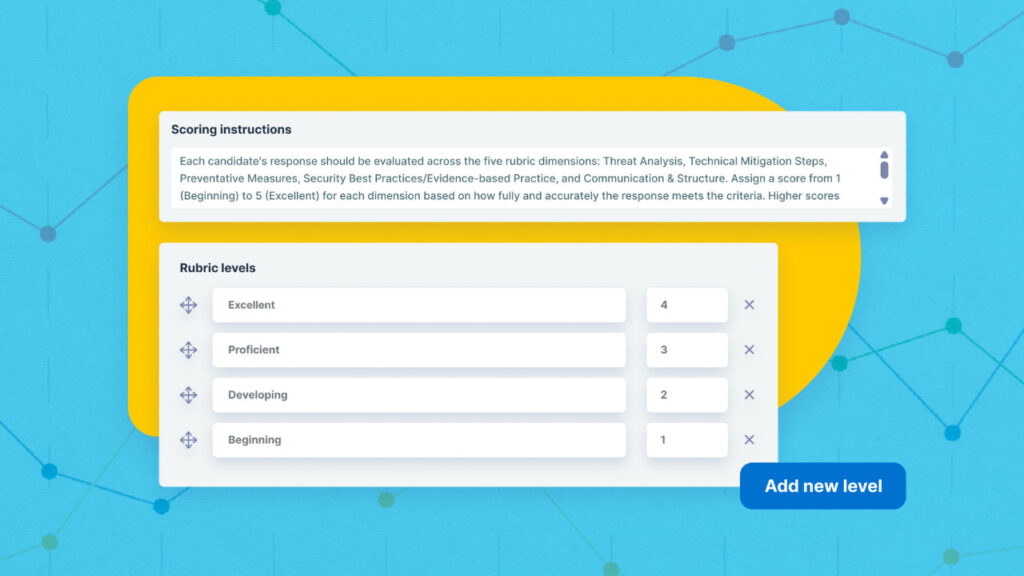

AI Scoring: How it works

Discover how our AI Scoring tool works to save SME time and deliver faster learner feedback with automated and rubric-aligned scoring.

AI Scoring: How it works

Bridging skill gaps in healthcare with scenario-based assessments

Discover how scenario-based assessments can close healthcare skills gaps using advanced skill-based testing methods.

Bridging skill gaps in healthcare with scenario-based assessments

Certification, scoring, and the AI powered future?

Is the future of certification AI-powered? Can it score as well as a human? And are the challenges worth the rewards?

Certification, scoring, and the AI powered future?

5 tools and strategies to successfully scale your certification programs

Scale your certification program using these 5 methods.

5 tools and strategies to successfully scale your certification programs

New standards for best-practice proctoring

Discover the brand new online proctoring standards that are set to shape the remote assessment landscape.

New standards for best-practice proctoring